Assessing and Controlling the Risks of AI in Pharmaceutical Operations

There is a lot of hype around AI, including its potential in the pharmaceutical industry. The reality of pharmaceutical manufacturing and quality control, however, is that a single validation failure can halt a production line, trigger a regulatory inspection, or, in the worst case, contribute to a patient safety event.

The pharma industry is known for being conservative in adopting new technologies, but that conservatism is the product of hard experience. Computer system validation (CSV) frameworks like GAMP have existed for decades precisely because computerized systems in GxP environments, including traditional ones, have failed, sometimes catastrophically.

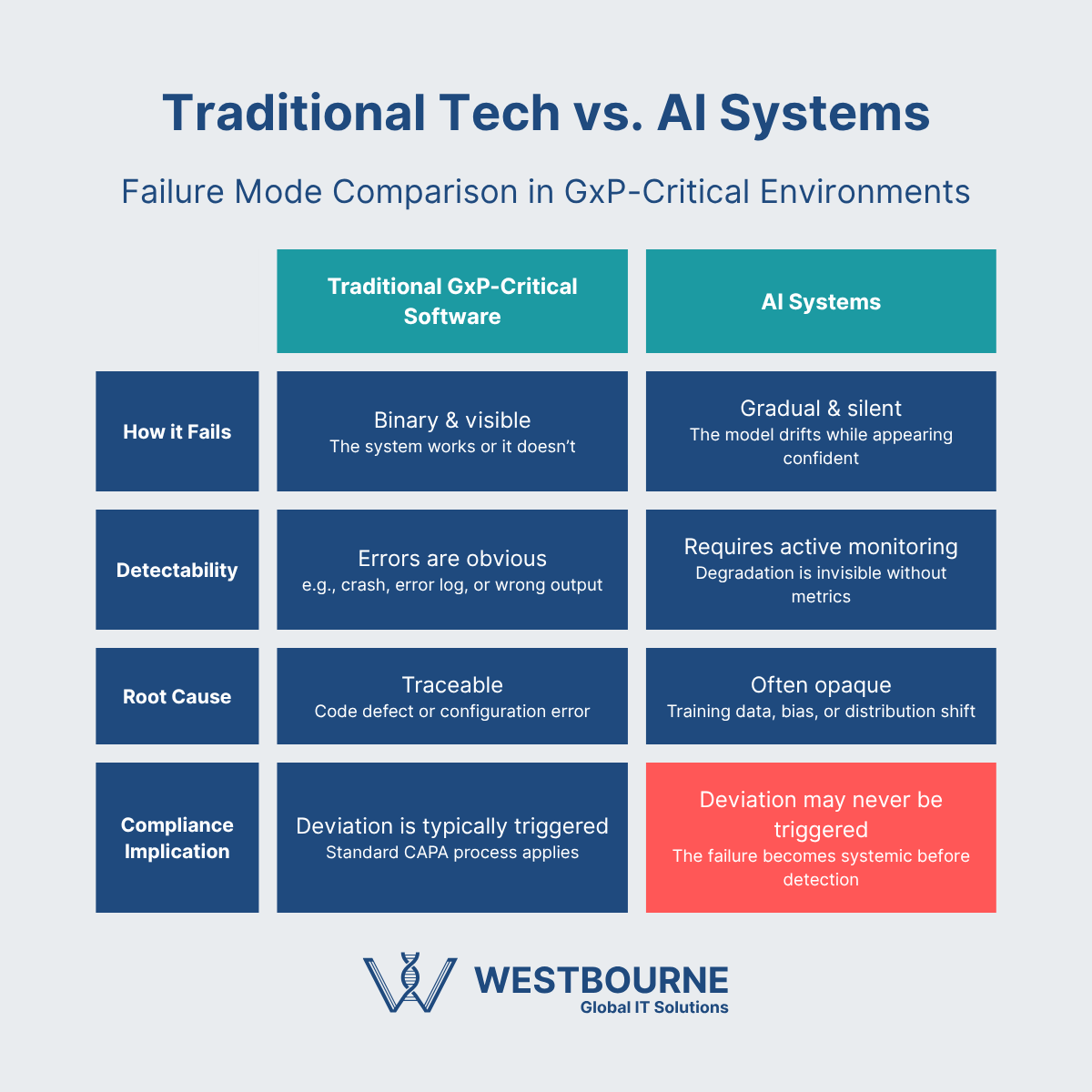

AI increases what technology can do, but it also increases the complexity and the risk. Specifically, AI technologies introduce new failure modes that are harder to detect and characterize than traditional software bugs. For example, model drift is silent, algorithmic bias can be systematically invisible, and a black-box AI technology that works 99.3% of the time can fail precisely when it matters most.

In this blog post, we look at the real-world risk landscape of AI technologies in pharmaceutical manufacturing and quality control operations. This includes how AI technologies could be adopted without creating new risk categories, as well as the tools, practices, and guidelines that still need to be developed.

AI Failure Modes and the Lack of an Established Playbook to Address Them

We have already highlighted some of the potential failure modes of AI technologies. The bigger consideration at present is the lack of an established playbook, such as regulatory guidance and industry best practices, to address them. These are evolving, but the reality is that we are in the very early days.

Some of the key issues that need to be addressed include:

- AI technologies fail differently – failures in traditional computerized systems can usually be detected, characterized, and dealt with. The same cannot be assumed of AI technologies.

- Model drift is silent – the performance of an AI technology can start to degrade as soon as it is validated, because the data it sees in operation gradually diverges from the data it was trained on. This degradation is silent: the system continues to produce outputs with the same apparent confidence as its accuracy declines.

- The black box problem – engineers often don’t understand why an AI technology produces the outputs that it does. This won’t cut it in the pharmaceutical industry, where transparency and explainability are essential.

- Algorithmic bias is a thing – AI models are typically trained on historical data. This means they will embed the historical biases, errors, and other problems along with the good data.
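One way to make the silent drift described above visible is to routinely compare the data a model sees in operation against the data it was validated on. The following is a minimal sketch only, assuming the Population Stability Index (PSI) as the drift metric. The function names, the simulated sensor readings, and the 0.1 and 0.25 thresholds (common rules of thumb, not regulatory limits) are all illustrative assumptions rather than an established method for GxP systems.

```python
import math
import random


def psi(reference, current, bins=10):
    """Population Stability Index between two numeric samples.

    Bins are derived from the reference (validation-time) sample.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            # Clamp values outside the reference range into the end bins.
            idx = min(max(int((x - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(sample), 1e-6) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def drift_status(score, warn=0.1, act=0.25):
    """Map a PSI score to a hypothetical three-level status."""
    if score >= act:
        return "investigate"
    if score >= warn:
        return "monitor"
    return "stable"


# Simulated data (assumption): one model input, e.g. a sensor reading.
rng = random.Random(42)
validation_data = [rng.gauss(50.0, 5.0) for _ in range(2000)]
same_process = [rng.gauss(50.0, 5.0) for _ in range(2000)]
shifted_process = [rng.gauss(56.0, 5.0) for _ in range(2000)]

print(drift_status(psi(validation_data, same_process)))
print(drift_status(psi(validation_data, shifted_process)))
```

A check like this only detects a change in the input data. It does not show whether the model's outputs are still correct, so in practice it would sit alongside periodic performance checks and would feed into existing deviation management processes.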

We don’t have to look far to see the problems of using AI technologies in GxP environments. The FDA’s own move into AI adoption is a salutary example. It launched Elsa (Electronic Language System Assistant) in June 2025 to help the FDA’s scientific reviewers and investigators work more efficiently. Accuracy issues, including data hallucinations and false citations, quickly emerged. And less than a year after its launch (at the time of writing), the FDA is having to move the underlying architecture of Elsa from Anthropic’s Claude model to Google’s Gemini following the Trump administration’s directive to federal agencies to stop using Claude.

An Evolving Regulatory Landscape

The regulatory landscape governing the use of AI technologies in pharmaceutical manufacturing and quality control is evolving. This means, at present, there is no single, comprehensive framework to work from. We expect that to change, but right now we are in a period of regulatory uncertainty.

This doesn’t mean pharmaceutical companies should ignore AI, as doing so creates the risk of falling behind the curve once the regulatory position becomes clearer and the technologies mature. However, right now, a high level of caution combined with regular engagement with regulators is the most prudent approach.

Achieving Near-Term Progress with AI Technologies in Pharma

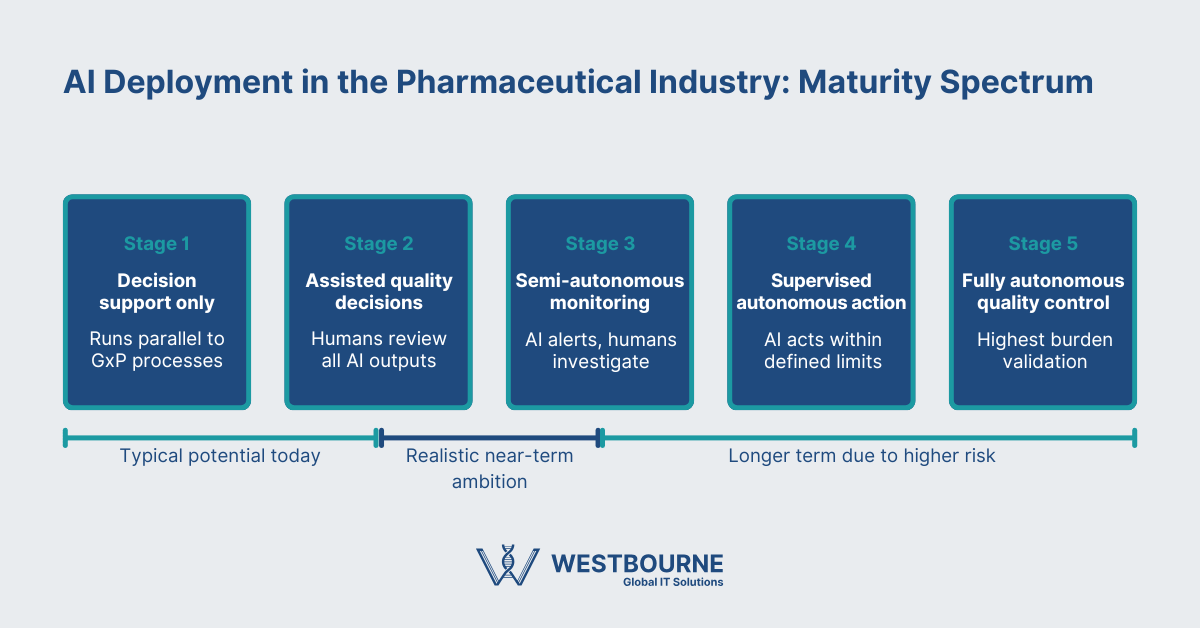

The advanced deployment of AI technologies in GxP-critical environments is likely to remain rare in the short-to-medium term. As mentioned in the previous section, that doesn’t mean ignoring AI technologies. Progress could be made in areas like AI-assisted document reviews, anomaly detection, and predictive maintenance, provided the appropriate controls are in place.

Even with these applications of AI technologies, assessing and controlling risk is critical. The following questions are essential starting points for understanding whether your organization is ready and the gaps that need to be overcome.

- What is the potential impact of the AI system on product quality and patient safety?

- What are the potential failure modes, how would you detect them, and what are the quality and patient safety consequences if you don’t?

- Can your existing change control and deviation management processes handle the new AI technology?

- Is your data fit for purpose, both for training and for ongoing operation?

- What is your human oversight model (for example, human in the loop), and is it realistic?

- Are the outputs from the AI model explainable and transparent?

- How will the AI technology increase cybersecurity and data privacy risks? How can you mitigate those risks?

- Do you have the internal capability to sustain (not just deploy) the AI technology in a controlled and validated state?

Conclusion

A lot of the talk around AI covers topics such as when the pharmaceutical industry will embrace it and how AI will change existing processes and workflows. Those are largely questions for the future. The more important and relevant question for now is what good AI adoption looks like. Defining that is how risks can be minimized and lasting value derived from AI in manufacturing and quality control.

We can help you with this process at Westbourne with our compliance, IT, and pharma laboratory expertise. Contact us to arrange a consultation.
